Is it possible for an AI to be trained just on data generated by another AI? It might sound like a harebrained idea. But it’s one that’s been around for quite some time — and as new, real data is increasingly hard to come by, it’s been gaining traction.

Anthropic used some synthetic data to train one of its flagship models, Claude 3.5 Sonnet. Meta fine-tuned its Llama 3.1 models using AI-generated data. And OpenAI is said to be sourcing synthetic training data from o1, its “reasoning” model, for the upcoming Orion.

But why does AI need data in the first place — and what kind of data does it need? And can this data really be replaced by synthetic data?

The importance of annotations

AI systems are statistical machines. Trained on a lot of examples, they learn the patterns in those examples to make predictions, like that “to whom” in an email typically precedes “it may concern.”
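To make the “statistical machine” idea concrete, here is a minimal sketch of a word-pair counter that predicts the next word from the previous one. It is a toy illustration, not how production language models work; the function names and the three-sentence corpus are made up for this example.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequently seen follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training examples, invented for illustration.
corpus = [
    "to whom it may concern",
    "to whom it may concern please find attached",
    "to whom should I address this",
]
model = train_bigram_model(corpus)
prediction = predict_next(model, "whom")
```

Because “it” follows “whom” more often than any other word in these examples, that is what the toy model predicts. Real systems learn far richer patterns, but the principle of learning from example frequencies is the same.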

Annotations, usually text labeling the meaning or parts of the data these systems ingest, are a key piece in these examples. They serve as guideposts, “teaching” a model to distinguish among things, places, and ideas.

Consider a photo-classifying model shown lots of pictures of kitchens labeled with the word “kitchen.” As it trains, the model will begin to make associations between “kitchen” and general characteristics of kitchens (e.g. that they contain fridges and countertops). After training, given a photo of a kitchen that wasn’t included in the initial examples, the model should be able to identify it as such. (Of course, if the pictures of kitchens were labeled “cow,” it would identify them as cows, which emphasizes the importance of good annotation.)
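The kitchen example can be sketched with a deliberately tiny classifier. This is an assumption-laden toy: each “photo” is reduced to three invented yes/no features (fridge, countertop, bed), and the model is a simple nearest-centroid classifier rather than the neural networks used in practice.

```python
def train_centroids(examples):
    """Average the feature vectors of all examples that share a label."""
    sums, counts = {}, {}
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


def classify(centroids, features):
    """Return the label whose average example is closest to `features`."""
    def squared_distance(center):
        return sum((a - b) ** 2 for a, b in zip(center, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical features per photo: [has_fridge, has_countertop, has_bed]
kitchen_photos = [[1, 1, 0], [1, 1, 0], [1, 0, 0]]
bedroom_photos = [[0, 0, 1], [0, 0, 1]]
new_photo = [1, 1, 0]  # an unseen kitchen

good_labels = ([(p, "kitchen") for p in kitchen_photos]
               + [(p, "bedroom") for p in bedroom_photos])
bad_labels = ([(p, "cow") for p in kitchen_photos]
              + [(p, "bedroom") for p in bedroom_photos])

good_guess = classify(train_centroids(good_labels), new_photo)
bad_guess = classify(train_centroids(bad_labels), new_photo)
```

The model trained on the mislabeled set learns exactly the same associations, but attaches them to the word “cow.” The quality of the labels bounds the quality of the model.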

The appetite for AI and the need to provide labeled data for its development have ballooned the market for annotation services. Dimension Market Research estimates that it’s worth $838.2 million today — and will be worth $10.34 billion in the next ten years. While there aren’t precise estimates of how many people engage in labeling work, a 2022 paper pegs the number in the “millions.”

Companies large and small rely on workers employed by data annotation firms to create labels for AI training sets. Some of these jobs pay reasonably well, particularly if the labeling requires specialized knowledge (e.g. math expertise). Others can be backbreaking. Annotators in developing countries are paid only a few dollars per hour on average without any benefits or guarantees of future gigs.

A drying data well

So there are humanistic reasons to seek out alternatives to human-generated labels. But there are also practical ones.

Humans can only label so fast. Annotators also have biases that can manifest in their annotations, and, subsequently, any models trained on them. Annotators make mistakes, or get tripped up by labeling instructions. And paying humans to do things is expensive.

Data in general is expensive, for that matter. Shutterstock is charging AI vendors tens of millions of dollars to access its archives, while Reddit has made hundreds of millions from licensing data to Google, OpenAI, and others.

Lastly, data is also becoming harder to acquire.

Most models are trained on massive collections of public data — data that owners are increasingly choosing to gate over fears their data will be plagiarized, or that they won’t receive credit or attribution for it. More than 35% of the world’s top 1,000 websites now block OpenAI’s web scraper. And around 25% of data from “high-quality” sources has been restricted from the major datasets used to train models, one recent study found.

Should the current access-blocking trend continue, the research group Epoch AI projects that developers will run out of data to train generative AI models between 2026 and 2032. That, combined with fears of copyright lawsuits and of objectionable material making its way into open datasets, has forced a reckoning for AI vendors.

Synthetic alternatives

At first glance, synthetic data would appear to be the solution to all these problems. Need annotations? Generate ’em. More example data? No problem. The sky’s the limit.

And to a certain extent, this is true.

“If ‘data is the new oil,’ synthetic data pitches itself as biofuel, creatable without the negative externalities of the real thing,” Os Keyes, a PhD candidate at the University of Washington who studies the ethical impact of emerging technologies, told TechCrunch. “You can take a small starting set of data and simulate and extrapolate new entries from it.”

The AI industry has taken the concept and run with it.

This month, Writer, an enterprise-focused generative AI company, debuted a model, Palmyra X 004, trained almost entirely on synthetic data. Developing it cost just $700,000, Writer claims, compared to estimates of $4.6 million for a comparably sized OpenAI model.

Microsoft’s Phi open models were trained using synthetic data, in part. So were Google’s Gemma models. Nvidia this summer unveiled a model family designed to generate synthetic training data, and AI startup Hugging Face recently released what it claims is the largest AI training dataset of synthetic text.

Synthetic data generation has become a business in its own right — one that could be worth $2.34 billion by 2030. Gartner predicts that 60% of the data used for AI and analytics projects this year will be synthetically generated.

Luca Soldaini, a senior research scientist at the Allen Institute for AI, noted that synthetic data techniques can be used to generate training data in a format that’s not easily obtained through scraping (or even content licensing). For example, in training its video generator Movie Gen, Meta used Llama 3 to create captions for footage in the training data, which humans then refined to add more detail, like descriptions of the lighting.

Along these same lines, OpenAI says that it fine-tuned GPT-4o using synthetic data to build the sketchpad-like Canvas feature for ChatGPT. And Amazon has said that it generates synthetic data to supplement the real-world data it uses to train speech recognition models for Alexa.

“Synthetic data models can be used to quickly expand upon human intuition of which data is needed to achieve a specific model behavior,” Soldaini said.

Synthetic risks

Synthetic data is no panacea, however. It suffers from the same “garbage in, garbage out” problem as all AI. Models create synthetic data, and if the data used to train these models has biases and limitations, their outputs will be similarly tainted. For instance, groups poorly represented in the base data will be so in the synthetic data.

“The problem is, you can only do so much,” Keyes said. “Say you only have 30 Black people in a dataset. Extrapolating out might help, but if those 30 people are all middle-class, or all light-skinned, that’s what the ‘representative’ data will all look like.”

To this point, a 2023 study by researchers at Rice University and Stanford found that over-reliance on synthetic data during training can create models whose “quality or diversity progressively decrease.” Sampling bias — poor representation of the real world — causes a model’s diversity to worsen after a few generations of training, according to the researchers (although they also found that mixing in a bit of real-world data helps to mitigate this).
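The dynamic the researchers describe can be illustrated with a toy simulation. This is a sketch under strong simplifying assumptions, not the study's method: the “model” is just a frequency table, each generation is trained only on samples drawn from the previous one, and the dataset, names, and `real_fraction` parameter are invented for illustration.

```python
import random
from collections import Counter


def next_generation(data, size, rng):
    """'Train' by counting item frequencies, then sample a synthetic dataset."""
    counts = Counter(data)
    values = list(counts)
    weights = [counts[v] for v in values]
    return rng.choices(values, weights=weights, k=size)


def simulate(real_data, generations, rng, real_fraction=0.0):
    """Track how many distinct items survive each round of retraining."""
    size = len(real_data)
    n_real = int(real_fraction * size)  # real examples mixed in per round
    data = list(real_data)
    diversity = [len(set(data))]
    for _ in range(generations):
        synthetic = next_generation(data, size, rng)
        data = synthetic[: size - n_real] + rng.sample(real_data, n_real)
        diversity.append(len(set(data)))
    return diversity


# Hypothetical dataset: one common item and ten rare ones.
real_data = ["common"] * 90 + [f"rare_{i}" for i in range(10)]

synthetic_only = simulate(real_data, 30, random.Random(0))
with_real_mix = simulate(real_data, 30, random.Random(0), real_fraction=0.2)
```

With `real_fraction=0.0`, an item that fails to be sampled in one generation can never reappear, so the count of distinct items can only hold steady or fall. Mixing real examples back in each round gives rare items a way to return, which is the intuition behind the mitigation the researchers report.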

Keyes sees additional risks in complex models such as OpenAI’s o1, which he thinks could produce harder-to-spot hallucinations in their synthetic data. These, in turn, could reduce the accuracy of models trained on the data — especially if the hallucinations’ sources aren’t easy to identify.

“Complex models hallucinate; data produced by complex models contain hallucinations,” Keyes added. “And with a model like o1, the developers themselves can’t necessarily explain why artefacts appear.”

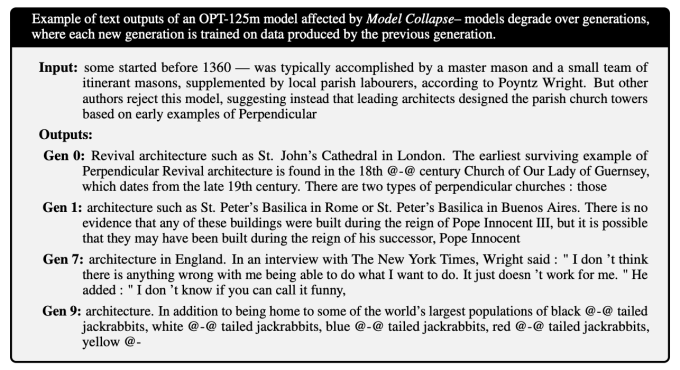

Compounding hallucinations can lead to gibberish-spewing models. A study published in the journal Nature reveals how models, trained on error-ridden data, generate even more error-ridden data, and how this feedback loop degrades future generations of models. Models lose their grasp of more esoteric knowledge over generations, the researchers found — becoming more generic and often producing answers irrelevant to the questions they’re asked.

A follow-up study shows that other types of models, like image generators, aren’t immune to this sort of collapse.

Soldaini agrees that “raw” synthetic data isn’t to be trusted, at least if the goal is to avoid training forgetful chatbots and homogeneous image generators. Using it “safely,” he says, requires thoroughly reviewing, curating, and filtering it, and ideally pairing it with fresh, real data, just like you’d do with any other dataset.

Failing to do so could eventually lead to model collapse, where a model becomes less “creative” and more biased in its outputs, seriously compromising its functionality. Though this process could be identified and arrested before it gets serious, it is a risk.

“Researchers need to examine the generated data, iterate on the generation process, and identify safeguards to remove low-quality data points,” Soldaini said. “Synthetic data pipelines are not a self-improving machine; their output must be carefully inspected and improved before being used for training.”
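As a rough illustration of what such safeguards might look like, the sketch below applies three simple filters to generated text before it would be used for training. The heuristics, thresholds, and function name are assumptions chosen for this example; they are not drawn from any lab's actual pipeline, which would involve far more careful review.

```python
def filter_synthetic(samples, min_words=4, max_repeat_ratio=0.5):
    """Drop generated samples that are too short, duplicated, or repetitive."""
    seen = set()
    kept = []
    for text in samples:
        # Normalize case and whitespace so near-identical copies match.
        normalized = " ".join(text.lower().split())
        words = normalized.split()
        if len(words) < min_words:
            continue  # too short to be a useful example
        if normalized in seen:
            continue  # duplicate of an earlier sample
        top_share = max(words.count(w) for w in set(words)) / len(words)
        if top_share > max_repeat_ratio:
            continue  # one word dominates: likely degenerate output
        seen.add(normalized)
        kept.append(text)
    return kept


# Hypothetical generated samples, invented for illustration.
generated = [
    "The kitchen has a fridge and a countertop.",
    "the kitchen has a  fridge and a countertop.",
    "ok",
    "spam spam spam spam spam eggs",
]
clean = filter_synthetic(generated)
```

Only the first sample survives here: the second is a duplicate after normalization, the third is too short, and the fourth is dominated by a single repeated word. Automated checks like these catch only the crudest failures, which is why human inspection remains part of the process Soldaini describes.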

OpenAI CEO Sam Altman once argued that AI will someday produce synthetic data good enough to effectively train itself. But — assuming that’s even feasible — the tech doesn’t exist yet. No major AI lab has released a model trained on synthetic data alone.

At least for the foreseeable future, it seems we’ll need humans in the loop somewhere to make sure a model’s training doesn’t go awry.
