The immense and quickly advancing computing requirements of AI models could lead to the industry discarding the e-waste equivalent of more than 10 billion iPhones per year by 2030, researchers project.

In a paper published in the journal Nature, researchers from Cambridge University and the Chinese Academy of Sciences take a shot at predicting just how much e-waste this growing industry could produce. Their aim is not to limit adoption of the technology, which they emphasize at the outset is promising and likely inevitable, but to better prepare the world for the tangible results of its rapid expansion.

Energy costs, they explain, have been looked at closely, as they are already in play.

“However, the physical materials involved in their life cycle, and the waste stream of obsolete electronic equipment … have received less attention.

“Our study aims not to precisely forecast the quantity of AI servers and their associated e-waste, but rather to provide initial gross estimates that highlight the potential scales of the forthcoming challenge, and to explore potential circular economy solutions.”

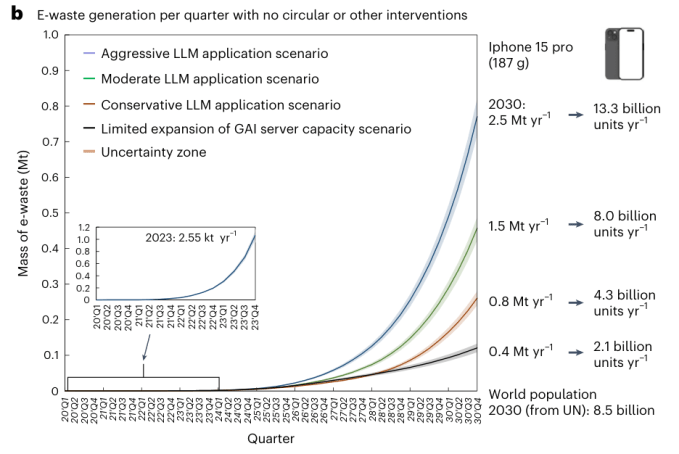

It’s necessarily a hand-wavy business, projecting the secondary consequences of a notoriously fast-moving and unpredictable industry. But someone has to at least try, right? The point is not to get it right within a percentage, but within an order of magnitude. Are we talking about tens of thousands of tons of e-waste, hundreds of thousands, or millions? According to the researchers, it’s probably toward the high end of that range.

The researchers modeled a few scenarios of low, medium, and high growth, along with what kinds of computing resources would be needed to support those, and how long they would last. Their basic finding is that waste would increase by as much as a thousandfold over 2023:

“Our results indicate potential for rapid growth of e-waste from 2.6 thousand tons (kt) [per year] in 2023 to around 0.4–2.5 million tons (Mt) [per year] in 2030,” they write.
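For a rough sense of those numbers, here is a back-of-the-envelope sketch of the arithmetic. The tonnage figures come from the quote above; treating "tons" as metric tons and using 187 grams as the mass of a single phone are assumptions made here for illustration, not details from the article.

```python
# Annual e-waste figures quoted from the paper (assumed to be metric tons).
WASTE_2023_T = 2.6e3        # 2.6 kt in 2023
WASTE_2030_LOW_T = 0.4e6    # 0.4 Mt in 2030, low end of the range
WASTE_2030_HIGH_T = 2.5e6   # 2.5 Mt in 2030, high end of the range

# Assumption, not from the article: mass of one smartphone in kilograms.
PHONE_MASS_KG = 0.187


def growth_factor(start_t: float, end_t: float) -> float:
    """Return how many times larger end_t is than start_t."""
    return end_t / start_t


def phone_equivalents(waste_t: float, phone_mass_kg: float = PHONE_MASS_KG) -> float:
    """Convert a mass in metric tons into a number of phones of the given mass."""
    return waste_t * 1000 / phone_mass_kg


low_growth = growth_factor(WASTE_2023_T, WASTE_2030_LOW_T)
high_growth = growth_factor(WASTE_2023_T, WASTE_2030_HIGH_T)
high_phones = phone_equivalents(WASTE_2030_HIGH_T)

print(f"Growth over 2023: {low_growth:.0f}x to {high_growth:.0f}x")
print(f"High scenario in phones: {high_phones / 1e9:.1f} billion per year")
```

Under these assumptions the high scenario lands just short of a thousandfold increase, and comfortably above 10 billion phones' worth of waste per year.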

Now admittedly, using 2023 as a starting metric is maybe a little misleading: Because so much of the computing infrastructure was deployed over the last two years, the 2.6 kiloton figure doesn’t include that hardware as waste. That lowers the starting figure considerably.

But in another sense, the metric is quite real and accurate: These are, after all, the approximate e-waste amounts before and after the generative AI boom. We will see a sharp uptick in the waste figures when this first large wave of infrastructure reaches end of life over the next couple of years.

There are various ways this could be mitigated, which the researchers outline (again, only in broad strokes). For instance, servers at the end of their lifespan could be downcycled rather than thrown away, and components like communications and power could be repurposed as well. Software and efficiency could also be improved, extending the effective life of a given chip generation or GPU type. Interestingly, they favor updating to the latest chips as soon as possible, because otherwise a company may have to, say, buy two slower GPUs to do the job of one high-end one — doubling (and perhaps accelerating) the resultant waste.

These mitigations could reduce the waste load anywhere from 16 to 86% — obviously quite a range. But it’s not so much a question of uncertainty on effectiveness as uncertainty on whether these measures will be adopted and how much. If every H100 gets a second life in a low-cost inference server at a university somewhere, that spreads out the reckoning a lot; if only one in 10 gets that treatment, not so much.
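To see how wide that spread is in absolute terms, the sketch below applies the 16% and 86% reductions to the 0.4 and 2.5 million ton projections for 2030. Pairing every reduction with every scenario is a simplification made here for illustration; the researchers' own scenario modeling may combine them differently.

```python
# 2030 projections quoted from the paper (assumed to be metric tons per year).
WASTE_2030_T = {"low growth": 0.4e6, "high growth": 2.5e6}

# Reduction range reported for the mitigation strategies.
REDUCTIONS = {"least adoption": 0.16, "most adoption": 0.86}


def remaining_waste(waste_t: float, reduction: float) -> float:
    """Return the waste left after cutting waste_t by the given fraction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    return waste_t * (1.0 - reduction)


# Illustrative grid: every scenario paired with every reduction level.
results = {
    (scenario, adoption): remaining_waste(waste_t, reduction)
    for scenario, waste_t in WASTE_2030_T.items()
    for adoption, reduction in REDUCTIONS.items()
}

for (scenario, adoption), left_t in results.items():
    print(f"{scenario}, {adoption}: {left_t / 1e6:.2f} Mt per year")
```

Even on this crude grid, the gap between the best and worst cases is well over an order of magnitude, which is the point the researchers are making about adoption.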

That means that achieving the low end of the waste range rather than the high one is, in their estimation, a choice, not an inevitability.
